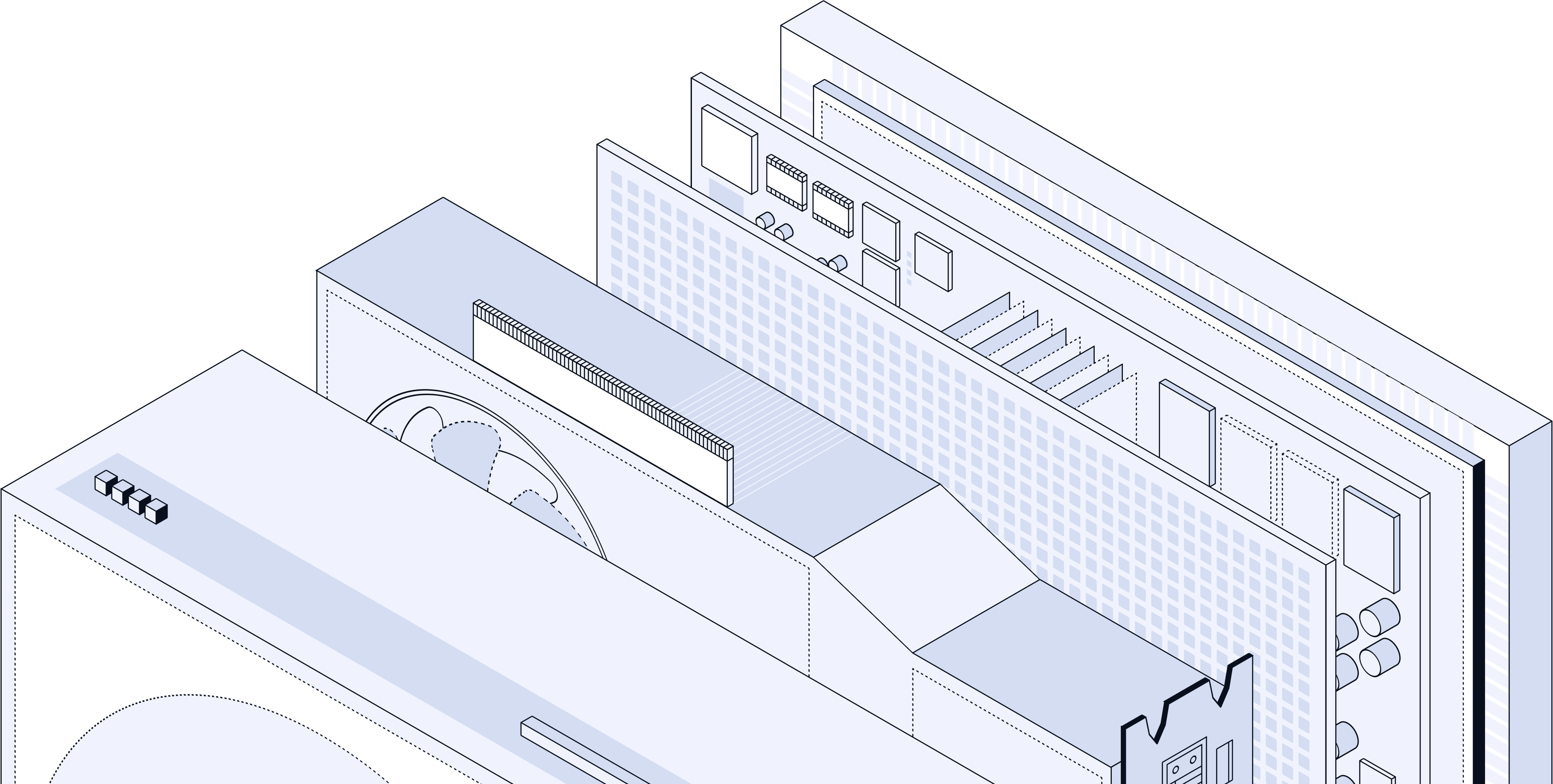

The engine behind AI deployment

Yutanix helps AI teams secure GPU capacity, stand up training and inference environments, and make deployment decisions with clearer visibility across the infrastructure market.

Impact numbers for AI teams

Representative outcomes across GPU availability, deployment speed, and delivered compute for teams scaling real AI workloads.

Uptime delivered

Availability maintained across training and inference environments that need dependable performance.

Faster vs cloud

Time to usable capacity improves when teams move onto infrastructure matched to their workload profile.

Petaflops deployed

Delivered compute across dedicated environments supporting training, inference, and broader enterprise AI adoption.

Four core services for AI teams

Yutanix helps AI teams secure compute, stand up training environments, run production inference, and make better capacity decisions as usage grows.

How AI teams move with Yutanix

A practical engagement flow built around demand clarity, environment readiness, and dependable long-term operations.

Need clarity before you commit capacity?

Talk through compute timing, environment needs, and rollout priorities before infrastructure decisions become expensive to unwind.

Three ways teams engage Yutanix

Some teams need upfront guidance, some need environment design, and some need ongoing capacity decisions as demand evolves.

Deployment advisory

We help teams evaluate options, sequence rollout decisions, and choose the right infrastructure path before deployment begins.

Environment design

We shape training and inference environments around workload mix, performance needs, control requirements, and operating constraints.

Capacity management

We help teams monitor demand, plan growth, and adjust capacity strategy as workloads and enterprise adoption expand.

What AI teams say

Feedback from teams that needed dependable GPU access, clearer deployment decisions, and infrastructure that could keep up with training and inference demand.

They helped us secure the right GPU capacity without losing momentum on model development timelines.

The rollout was structured, fast, and much easier to operate than stitching providers together on our own.

Our inference environment stayed stable under load, which gave the team real confidence to scale.